The image is built, scanned, and pushed. The version tag is v0.1.0-alpha.7.

And yet… nothing is running in the cluster.

That’s the deployment gap that catches a lot of people off guard. CI/CD ends with a container registry entry. The cluster doesn’t care about that — it needs a manifest, a chart, a reconciliation loop. The jump from “artifact in a registry” to “pod running in production” is exactly where the 4-repo GitOps model earns its keep.

This is the post where I close that loop.

Back in January I published “The Four-Repo GitOps Structure for My Homelab Platform” as a conceptual overview of the architecture. Since then, every post in the “Self-Hosting the Blog” series has covered the left side of the delivery pipeline: containerising the site, versioning the image, building security gates. Now we’re crossing to the right side, Kubernetes deployment, and I’m going to walk through exactly what it took to wire all four repositories together to serve the blog.

What Changed Across the Four Repositories

Before diving into the why, here’s the summary of what actually landed in each repository this week:

| Repository | Key Changes |
|---|---|
| homelab-k8s-argo-config | Added web namespace; fixed ExternalSecret base64 encoding for 1Password credentials |
| homelab-k8s-base-manifests | Added Hugo library template + deployable chart (v0.1.0) |
| homelab-k8s-environments | Added blog_hugo version file for dev (v0.1.0-alpha.7); created prod path placeholder |
| homelab-k8s-environments-apps | Built the full App-of-Apps structure: root app, web domain root, blog_hugo Application, runtime values |

Let me go through each layer in the same order that Argo CD resolves them.

Layer 1: Platform Prerequisites in homelab-k8s-argo-config

The argo-config repository owns everything the platform needs before workloads can land. For the blog deployment, two things were missing.

Adding a Namespace

Platform namespaces are declarative in base/namespaces/namespace.yaml. Adding web was a one-liner, but it’s the right place to track it:

    - apiVersion: v1
      kind: Namespace
      metadata:
        name: web

In an enterprise environment, namespace creation is often a cross-team ceremony. Keeping it in the platform repository (not in the app chart) means it’s provisioned once, before any workload arrives, and stays anchored to the platform lifecycle rather than the application lifecycle. Kustomize overlays for dev and prod can inherit this and add namespace-level policies on top.
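As a rough illustration of overlays layering policy on top of the shared namespaces, a dev overlay could pull in the base definitions and add a quota for web. This is a sketch only: the overlay path, the ResourceQuota and its limits are assumptions made for illustration, not taken from the repository, and it assumes base/namespaces/ carries its own kustomization.yaml. Two files are shown together, separated by `---`.

```yaml
# overlays/dev/kustomization.yaml (hypothetical path)
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
resources:
  - ../../base/namespaces        # assumes this directory has a kustomization.yaml
  - web-resource-quota.yaml      # hypothetical namespace-level policy
---
# overlays/dev/web-resource-quota.yaml (hypothetical file)
apiVersion: v1
kind: ResourceQuota
metadata:
  name: web-quota
  namespace: web
spec:
  hard:
    pods: "10"
    requests.cpu: "2"
    requests.memory: 2Gi
    limits.cpu: "4"
    limits.memory: 4Gi
```

The namespace itself stays in the base; the overlay only adds what differs per environment.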

Fixing the ExternalSecret Base64 Encoding

1Password Connect credentials land in the cluster through an ExternalSecret. When I first set it up, the raw value was being injected directly into the Kubernetes Secret. The problem: the target Secret field expects a base64-encoded JSON credential file, and ExternalSecrets was writing the raw string.

The fix is a template block with engineVersion: v2:

    target:
      template:
        engineVersion: v2
        data:
          1password-credentials.json: |-
            {{ "{{ .credentials | b64enc }}" }}

The b64enc pipe runs inside the ExternalSecrets template engine, encoding the remote reference value before it is written into the Secret. This is one of those “obvious in hindsight” issues: the container was crashing with a base64-decode error, which is a non-trivial signal to chase when you’re unfamiliar with how ExternalSecrets processes values.
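For context, here is roughly where that template block sits in a complete ExternalSecret. Treat it as a sketch under assumptions: the resource names, store name, remote key and property are placeholders, the apiVersion should match whatever your External Secrets Operator release serves, and the template line is written without the outer escaping, as it would appear in a manifest that is not passed through another template engine first.

```yaml
apiVersion: external-secrets.io/v1beta1   # adjust to the version your operator serves
kind: ExternalSecret
metadata:
  name: onepassword-connect-credentials   # hypothetical name
  namespace: onepassword                   # hypothetical namespace
spec:
  refreshInterval: 1h
  secretStoreRef:
    kind: ClusterSecretStore
    name: example-store                    # hypothetical store
  target:
    name: op-credentials                   # hypothetical Secret name
    template:
      engineVersion: v2
      data:
        # .credentials refers to the secretKey declared under spec.data below
        1password-credentials.json: |-
          {{ .credentials | b64enc }}
  data:
    - secretKey: credentials
      remoteRef:
        key: example-item                  # hypothetical remote item
        property: credentials-file         # hypothetical field
```

The point to notice is the link between `secretKey: credentials` and `.credentials` in the template: the fetched value is only transformed if the template references it by that key.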

Layer 2: The Helm Chart in homelab-k8s-base-manifests

This repository is the chart source. For workloads, I use a library template pattern: one generic Helm library chart provides reusable named templates, and a thin deployable chart wraps them.

The Library Template

The library chart lives at templates/microservices-template/template-hugo/v0.1.0/. It defines named templates for all standard Kubernetes resources:

- _deployment.yaml → homelab.deployment-hugo
- _service.yaml → homelab.service-hugo
- _probes.yaml → liveness and readiness probe definitions
- _env_config_map.yaml → ConfigMap from values.envConf
- _env_external_secret.yaml → ExternalSecret from values.externalSecretsConfig
- _labels.yaml → standard label set
- _microservice.yaml → calls all of the above
- _variables.yaml → shared variable resolution

The deployable chart at charts/microservices/hugo/v0.1.0/ is intentionally thin:

    # Chart.yaml
    apiVersion: v2
    name: hugo
    version: 0.1.0
    dependencies:
      - name: template-hugo
        version: "0.1.0"
        repository: "file://../../../../templates/microservices-template/template-hugo/v0.1.0"

And a single template call:

    # templates/hugo_static_website.yaml
    {{ include "homelab.microservice" . }}

This pattern mirrors how enterprise platform teams ship Helm: a blessed template library that enforces standards (label conventions, probe patterns, resource defaults), and thin consumer charts per workload type. App teams get a values API, not a Kubernetes API.
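To make the pattern concrete, here is a minimal sketch of what two of the library files could look like. The template bodies and label keys are assumptions written for illustration (the real ones are not shown in this post); only the template names, the appName / componentName / namespace value keys, and the 80 → 8080 Service ports come from this article.

```yaml
# templates/_service.yaml in the library chart (illustrative body)
{{- define "homelab.service-hugo" -}}
apiVersion: v1
kind: Service
metadata:
  name: {{ .Values.appName }}-{{ .Values.componentName }}
  namespace: {{ .Values.namespace }}
spec:
  type: ClusterIP
  ports:
    - port: 80
      targetPort: 8080
  selector:
    app.kubernetes.io/name: {{ .Values.appName }}            # assumed label keys
    app.kubernetes.io/component: {{ .Values.componentName }}
{{- end -}}

# templates/_microservice.yaml in the library chart (illustrative body)
{{- define "homelab.microservice" -}}
{{ include "homelab.deployment-hugo" . }}
---
{{ include "homelab.service-hugo" . }}
{{- end -}}
```

Because the consumer chart calls `include` with `.` as the context, `.Values` inside the library templates resolves to the consumer's values, which is what turns the values file into the only interface an app team touches. A Helm library chart also declares `type: library` in its own Chart.yaml so it cannot be installed on its own.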

Layer 3: The Version Registry in homelab-k8s-environments

This repository holds one thing per deployed app: the image version. That’s it.

    # environments/dev/web/blog_hugo/values.yaml
    version: v0.1.0-alpha.7

The production path exists as a placeholder (environments/prod/.gitkeep) but holds no version yet: promotion to prod is a deliberate pull request, not an automated step.

The strict ownership rule here is worth emphasising. In every Argo CD multi-source Application in this setup, the values from this registry are loaded after the runtime values from homelab-k8s-environments-apps. Because later value files win in Helm’s merge order, the version registry takes precedence on any overlapping key:

    sources:
      - ref: valuesRepo          # environments-apps (runtime)
      - ref: valuesRepoDefault   # environments (version) ← wins on overlapping keys

This snippet is shorthand: the precedence comes from the order of the entries under helm.valueFiles in the chart source, not from the order of the sources list itself. If you ever accidentally put runtime config (replicas, resources) in the version registry, it silently overrides the runtime values. Keeping the version registry strictly version-only prevents that class of bug entirely.
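A small worked example makes the precedence rule tangible. The stray replicas entry below is hypothetical, added only to show what goes wrong when runtime config leaks into the version registry.

```yaml
# 1) Runtime values (environments-apps), loaded first
deployment:
  spec:
    replicas: 1
---
# 2) Version registry (environments), loaded second
version: v0.1.0-alpha.7
deployment:
  spec:
    replicas: 3        # hypothetical stray key that does not belong here
---
# 3) Effective values Helm renders with: maps are merged key by key,
#    and on a conflict the later file wins
version: v0.1.0-alpha.7
deployment:
  spec:
    replicas: 3        # the runtime value of 1 is silently overridden
```

Nothing in the rendered output hints at which file supplied the value, which is why the rule is easier to enforce at the repository level than to debug after the fact.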

In a production fintech setup I’ve run, a similar discipline was enforced by a separate team owning the version registry with read-only access from app teams. Here in the homelab it’s enforced by convention, but the same logic applies.

Layer 4: App Definitions and Runtime Values in homelab-k8s-environments-apps

This is the largest change set, and the most structurally interesting one. It introduces the full App-of-Apps hierarchy for the dev environment.

The App-of-Apps Chain

The pattern builds a hierarchy of Argo CD Applications, each pointing to the next level:

    root-homelab-dev                    (bootstrap entry point)
    └── root-app/
        └── root-web-dev                (web domain root)
            └── web/
                └── blog_hugo.yaml      (actual workload Application)

Step by step:

1. Bootstrap root: root-homelab-dev.yaml

        apiVersion: argoproj.io/v1alpha1
        kind: Application
        metadata:
          name: root-homelab-dev
          namespace: argocd
        spec:
          source:
            path: environments/dev/_root/root-app
            repoURL: https://github.com/anvaplus/homelab-k8s-environments-apps
            targetRevision: HEAD
          project: argo-config
          syncPolicy:
            automated:
              prune: true
              selfHeal: true

This is what you apply once to bootstrap. After that, Argo CD picks up everything else from the repo automatically.

2. Root app group: root-app/ is a Kustomize directory

        # root-app/kustomization.yaml
        resources:
          - root-web.yaml

3. Domain root: root-web.yaml points Argo CD at the web/ domain folder, which contains its own Kustomization listing all web Applications:

        # web/kustomization.yaml
        resources:
          - blog_hugo/blog_hugo.yaml

This indirection pays off when the second app lands. Adding homelab_hugo to the web domain means adding one line to this file and dropping in two new files. Nothing higher in the chain changes.
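The root-web.yaml file itself is not shown here. A plausible shape, assuming it mirrors the bootstrap Application and that the web folder lives at environments/dev/web (inferred from the values paths used by the workload Application, not confirmed), is sketched below together with the Kustomization as it might look once a second app is added. The destination block and the second app's file name are assumptions.

```yaml
# root-app/root-web.yaml (sketch; field values are assumptions)
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: root-web-dev
  namespace: argocd
spec:
  project: argo-config
  source:
    repoURL: https://github.com/anvaplus/homelab-k8s-environments-apps
    targetRevision: HEAD
    path: environments/dev/web      # assumed location of web/kustomization.yaml
  destination:
    server: https://kubernetes.default.svc
    namespace: argocd               # child Application objects are created here
  syncPolicy:
    automated:
      prune: true
      selfHeal: true
---
# web/kustomization.yaml after a second app lands (file name is hypothetical)
resources:
  - blog_hugo/blog_hugo.yaml
  - homelab_hugo/homelab_hugo.yaml
```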

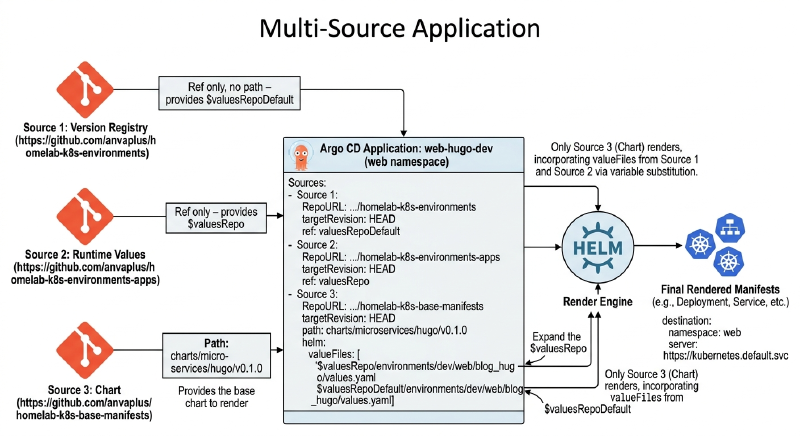

The Multi-Source Application

The workload Application is where the three value sources converge:

    # web/blog_hugo/blog_hugo.yaml
    apiVersion: argoproj.io/v1alpha1
    kind: Application
    metadata:
      name: web-hugo-dev
      namespace: argocd
      finalizers:
        - resources-finalizer.argocd.argoproj.io
    spec:
      destination:
        namespace: web
        server: https://kubernetes.default.svc
      project: argo-config
      sources:
        # Source 1: version registry (ref only, no path; provides $valuesRepoDefault)
        - repoURL: https://github.com/anvaplus/homelab-k8s-environments
          targetRevision: HEAD
          ref: valuesRepoDefault
        # Source 2: runtime values (ref only; provides $valuesRepo)
        - repoURL: https://github.com/anvaplus/homelab-k8s-environments-apps
          targetRevision: HEAD
          ref: valuesRepo
        # Source 3: chart (path + valueFiles; this is what renders)
        - repoURL: https://github.com/anvaplus/homelab-k8s-base-manifests
          targetRevision: HEAD
          path: charts/microservices/hugo/v0.1.0
          helm:
            valueFiles:
              - $valuesRepo/environments/dev/web/blog_hugo/values.yaml
              - $valuesRepoDefault/environments/dev/web/blog_hugo/values.yaml
      syncPolicy:
        automated:
          prune: true
          selfHeal: true

The ref: sources are just repository anchors: they expose their content as the $valuesRepo and $valuesRepoDefault variables referenced in valueFiles. Only the third source (with path:) contains the chart that renders.

This is one of the most powerful Argo CD features that I see underused. Standard single-source Applications can’t separate “what version” from “how configured” — you end up with environment-specific chart forks or complex Kustomize overlays. Multi-source solves this cleanly.
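As an illustration of that separation, a future prod Application could reuse the same chart and differ only in where its values come from. Prod is still a placeholder in this setup, so everything below is hypothetical: the prod value paths are assumptions, not existing files.

```yaml
# Hypothetical prod variant: only the sources block is shown
sources:
  - repoURL: https://github.com/anvaplus/homelab-k8s-environments
    targetRevision: HEAD
    ref: valuesRepoDefault
  - repoURL: https://github.com/anvaplus/homelab-k8s-environments-apps
    targetRevision: HEAD
    ref: valuesRepo
  - repoURL: https://github.com/anvaplus/homelab-k8s-base-manifests
    targetRevision: HEAD
    path: charts/microservices/hugo/v0.1.0     # same chart as dev
    helm:
      valueFiles:
        - $valuesRepo/environments/prod/web/blog_hugo/values.yaml          # assumed path
        - $valuesRepoDefault/environments/prod/web/blog_hugo/values.yaml   # assumed path
```

No chart fork and no overlay: the environment difference is expressed entirely as two file paths.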

Runtime Values

The values.yaml in this repository owns everything that isn’t the version:

    # web/blog_hugo/values.yaml
    appName: web
    componentName: hugo
    namespace: web
    environment: dev
    deployment:
      spec:
        replicas: 1
      image:
        repository: anvaplus/hugo-blog-example
        pullPolicy: IfNotPresent
      resources:
        requests:
          cpu: 100m
          memory: 128Mi
        limits:
          cpu: 300m
          memory: 256Mi

No version key here. That’s intentional… it lives only in homelab-k8s-environments. Any future automation that bumps the version (CI/CD writing to the version registry) doesn’t touch this file, and any runtime tuning (scaling replicas, adjusting resource limits) doesn’t touch the version registry.
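To show where the two files meet, here is a hedged sketch of how a Deployment template in the library might assemble the image reference. The template body, the label keys and the exact value paths (deployment.image.repository, deployment.image.pullPolicy, deployment.resources) are assumptions based on the values shown in this post; the real template may be structured differently.

```yaml
{{- define "homelab.deployment-hugo" -}}
apiVersion: apps/v1
kind: Deployment
metadata:
  name: {{ .Values.appName }}-{{ .Values.componentName }}
  namespace: {{ .Values.namespace }}
spec:
  replicas: {{ .Values.deployment.spec.replicas }}
  selector:
    matchLabels:
      app.kubernetes.io/name: {{ .Values.appName }}
      app.kubernetes.io/component: {{ .Values.componentName }}
  template:
    metadata:
      labels:
        app.kubernetes.io/name: {{ .Values.appName }}
        app.kubernetes.io/component: {{ .Values.componentName }}
    spec:
      containers:
        - name: {{ .Values.componentName }}
          # repository comes from the runtime values, the tag from the version registry
          image: "{{ .Values.deployment.image.repository }}:{{ .Values.version }}"
          imagePullPolicy: {{ .Values.deployment.image.pullPolicy }}
          ports:
            - containerPort: 8080
          resources:
            {{- toYaml .Values.deployment.resources | nindent 12 }}
{{- end -}}
```

The image line is the only place the two repositories touch: one supplies the repository name, the other supplies the tag.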

How It All Connects

At reconciliation time, Argo CD visits this Application and:

- Fetches all three sources at HEAD
- Resolves the $valuesRepo and $valuesRepoDefault variables
- Renders the Helm chart, layering values in order: runtime values first, then version values on top
- Applies the rendered manifests to the web namespace in the cluster

The final rendered objects for the blog_hugo Application include:

- A Deployment with a single replica, image anvaplus/hugo-blog-example:v0.1.0-alpha.7
- A Service of type ClusterIP on port 80 targeting 8080
- A ConfigMap for environment variables (Hugo base URL, language, environment)
- Optionally, an ExternalSecret if externalSecretsConfig is populated in values

The web namespace already exists (provisioned by argo-config), the image has passed security gates (built by the CI pipeline), and Argo CD simply matches desired state to actual state continuously.

The Loop Is Closed

Here’s the complete flow now, end to end:

    Git push (blog content change)
      → GitHub Actions PR validation (Gitleaks, Trivy, SonarCloud)
      → Merge to main
      → GitHub Actions deployment (multi-arch build, tag v0.1.0-alpha.7)
      → Image pushed to registry
      → CI writes version to homelab-k8s-environments (dev path)
      → Argo CD detects version change
      → Argo CD reconciles blog_hugo Application
      → Pod restarts with new image tag
      → Blog is live

Every step is automated and auditable. The image hash that passed security scanning is the same hash running in the cluster. The version that CI calculated is the version committed to Git and tracked in the environment registry.
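The “CI writes version” hop is the one step this post does not show in code. One way it could look in a GitHub Actions job is sketched below; the job name, secret name, bot identity and the hard-coded VERSION are assumptions, and the real pipeline may do this differently.

```yaml
# Hypothetical job in the image-build workflow
jobs:
  bump-dev-version:
    runs-on: ubuntu-latest
    env:
      VERSION: v0.1.0-alpha.7            # in practice, the tag computed earlier in the pipeline
    steps:
      - name: Check out the version registry
        uses: actions/checkout@v4
        with:
          repository: anvaplus/homelab-k8s-environments
          token: ${{ secrets.ENVIRONMENTS_REPO_TOKEN }}   # assumed secret with push access
      - name: Write the new version and push
        run: |
          # The file holds nothing but the version, so overwriting it wholesale is safe
          printf 'version: %s\n' "$VERSION" > environments/dev/web/blog_hugo/values.yaml
          git config user.name "ci-bot"
          git config user.email "ci-bot@users.noreply.github.com"
          git commit -am "chore(dev): blog_hugo ${VERSION}"
          git push
```

The resulting commit is the audit trail: the Git history of the version registry doubles as the deployment history for dev.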

This mirrors how I’ve seen compliant delivery pipelines run in regulated environments — the difference is that here the entire infrastructure fits in a spare homelab box and costs nothing beyond electricity.

What’s Next

The platform now runs the blog. The next logical step is connecting the deployment to the real domain: wiring up Traefik ingress and TLS certificates so blog.dev.thebestpractice.tech (publicly available after the next post) resolves to the pod. We’ve already built the networking layer in the Path to Automated TLS series… now it’s time to use it for the workload.

Stay tuned. Andrei